The Incident That Should Terrify Every SaaS Founder

In April 2021, Click Studios, the maker of the enterprise password manager Passwordstate, pushed what looked like a routine update to its customers. For roughly 28 hours, the product's in-place upgrade mechanism instead delivered malware built to harvest stored credentials, in a product the company says is used by more than 29,000 customers. When the breach came to light, the company's framing was almost reflexive: its upgrade functionality had been compromised by a bad actor using sophisticated techniques.

It didn't matter. Customers were told to reset every password stored in the product, and the explanation did nothing to reduce the company's exposure to litigation, regulatory scrutiny, and customer defections. The "we got hacked" defense, the one startup founders assume will shield them from liability, crumbled on contact with reality.

If you're shipping software, you need to understand why.

Why "We Were Victims Too" Doesn't Work

The instinct to claim victim status after a breach is understandable. You didn't intend to distribute malware. You were deceived by attackers. Surely the law recognizes that distinction?

It does—but not in the way founders expect.

Negligence law asks whether you took reasonable precautions to prevent foreseeable harms. When your software update mechanism becomes a malware distribution channel, courts don't ask "did you intend this?" They ask "should you have prevented this?"

The answer is almost always yes. Code signing, integrity verification, and secure update pipelines aren't cutting-edge innovations—they're baseline expectations. When you skip them, you're not an innocent victim. You're a company that chose to save engineering time at the cost of customer security.

The Reasonable Care Standard

Here's where founders get blindsided. Negligence claims don't require proving you acted maliciously. They require proving you failed to meet the standard of care that a reasonable company in your position would have maintained.

If other companies in your industry implement code signing, isolated build systems, and cryptographic verification of updates, and you don't, you've created a liability gap. The sophistication of the attacker matters far less than founders hope. What matters is whether industry-standard defenses would have stopped them.

For the Passwordstate breach, the attack vector was the product's own in-place upgrade mechanism, which attackers used to serve a tampered update package. A client that verified a signature on the update before applying it would likely have rejected the tampered payload before it did any damage. That single architectural decision, or the absence of it, defined the entire legal exposure.

The Contractual Trap

Many founders believe their Terms of Service and liability limitations will protect them. "We explicitly disclaim liability for security incidents," they'll say, pointing to Section 14(b) of their customer agreement.

There are two problems with this assumption.

First, many jurisdictions refuse to enforce liability waivers for gross negligence. If your security practices fall significantly below industry standards, a court may treat your limitations as unenforceable on public policy grounds. You can't contract your way out of recklessness.

Second, your enterprise customers have lawyers who negotiate contracts. Those mutual indemnification clauses and carve-outs for "willful misconduct" create pathways around your standard limitations. When a Fortune 500 company's credentials get harvested through your update mechanism, they're not bound by the clickwrap agreement you show to free-tier users.

Regulatory Exposure Multiplies the Damage

Beyond private litigation, security incidents trigger regulatory scrutiny that your Terms of Service can't touch. The FTC has enforcement authority over companies that engage in unfair or deceptive practices, and promising security you don't deliver can qualify as both.

If your marketing says "enterprise-grade security" but your build pipeline runs on a shared server with default credentials, you've made a representation you can't support. Post-breach, those marketing claims become evidence in enforcement actions that can result in consent decrees, mandatory security audits, and ongoing compliance requirements that cost more than the engineering investments you were trying to avoid.

What Founders Need to Implement

The good news is that the security measures that create legal protection are well-understood and increasingly automated. Here's the minimum viable security posture for any company shipping software updates:

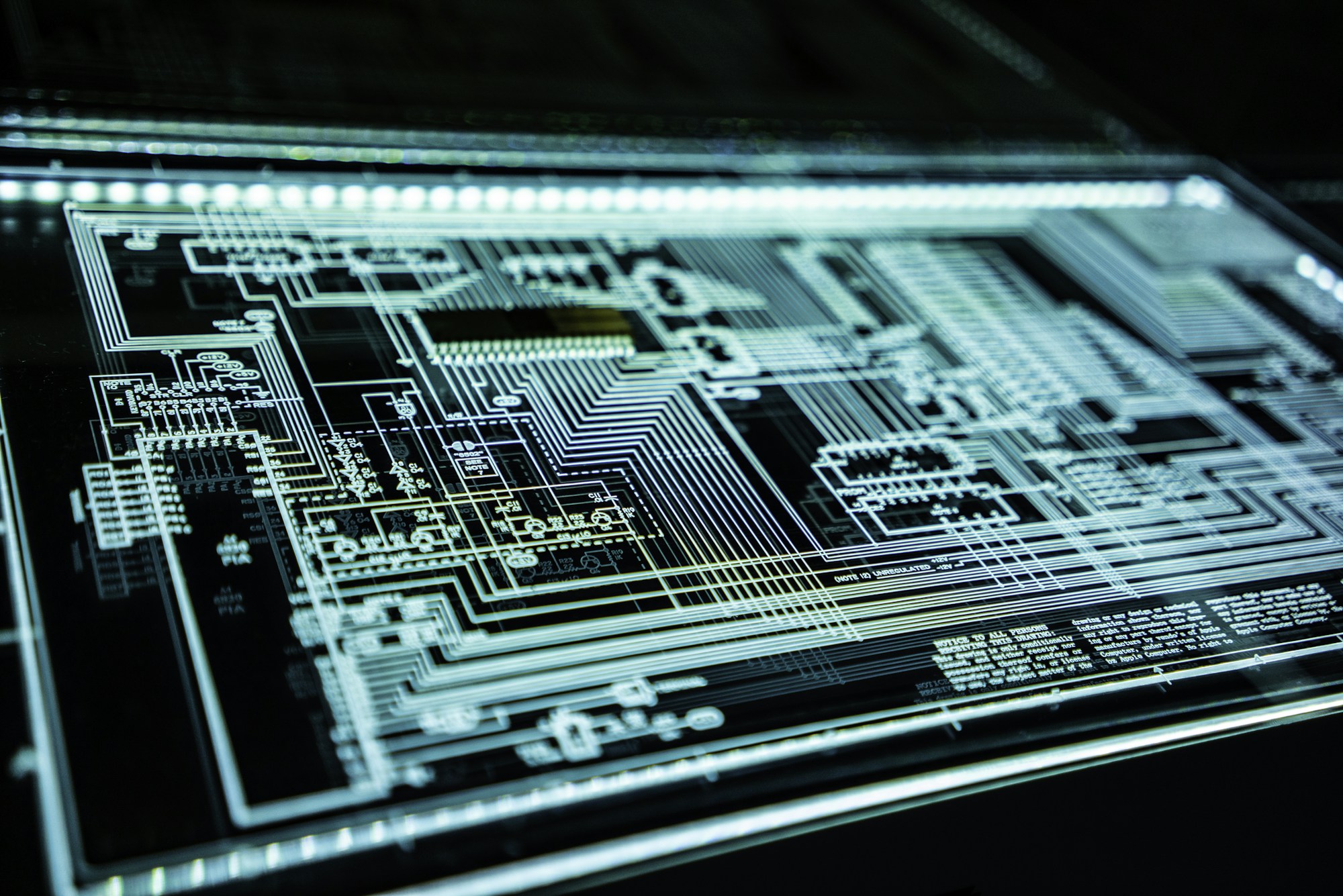

Code Signing Infrastructure

Every binary you distribute should be cryptographically signed with a key stored in a hardware security module (HSM) or secure key management service. The signing process should be isolated from your general development environment. No developer laptop should have direct access to production signing keys.

Build Pipeline Integrity

Your CI/CD system should produce reproducible builds from source control with cryptographic verification at each stage. Compromise of any single system shouldn't enable injection of malicious code into distributed binaries. This isn't paranoia—it's the SolarWinds lesson that every security professional now treats as foundational.
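One way to make "reproducible builds with cryptographic verification" concrete is a release gate that only publishes an artifact when two independent builders produce byte-identical output from the same commit. The sketch below is illustrative only: `release_gate` and its parameter names are hypothetical, and it assumes your build is already deterministic and that the two builders share no credentials or infrastructure.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of the given bytes as a hex string."""
    return hashlib.sha256(data).hexdigest()


def release_gate(artifact_from_builder_a: bytes, artifact_from_builder_b: bytes) -> str:
    """Return the agreed digest if both builders produced identical bytes.

    Hypothetical helper: in a real pipeline the two artifacts would come
    from separately administered build machines, so that compromising one
    of them is not enough to slip modified code into a release.
    """
    digest_a = sha256_hex(artifact_from_builder_a)
    digest_b = sha256_hex(artifact_from_builder_b)
    if digest_a != digest_b:
        # Fail closed: a mismatch means either the build is not
        # reproducible or one builder produced tampered output.
        raise RuntimeError(
            f"build outputs differ: {digest_a} != {digest_b}; refusing to release"
        )
    return digest_a
```

The digest this returns is what you would hand to the isolated signing step, so the signing system only ever signs output that two builders agreed on.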

Update Verification

Your client applications should verify signatures before applying updates. If a tampered update somehow reaches your distribution servers, the client should reject it. Defense in depth means assuming every layer will be breached and building accordingly.
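A minimal sketch of that client-side check, under stated assumptions: the manifest format (a JSON object with `version` and `sha256` fields) is hypothetical, and `signature_is_valid` is a placeholder for a callable your application supplies, wrapping a real asymmetric signature check (Ed25519, for example) against a public key pinned in the client. What the sketch tries to show is the ordering and the fail-closed behavior, not a particular signature scheme.

```python
import hashlib
import json
from typing import Callable, Tuple


class UpdateRejected(Exception):
    """Raised when any verification step fails; the update must not be applied."""


def verify_update(
    payload: bytes,
    manifest_bytes: bytes,
    signature: bytes,
    signature_is_valid: Callable[[bytes, bytes], bool],
    installed_version: Tuple[int, ...],
) -> dict:
    # Step 1: authenticate the manifest before trusting anything inside it.
    # signature_is_valid(manifest_bytes, signature) is an assumed callable.
    if not signature_is_valid(manifest_bytes, signature):
        raise UpdateRejected("manifest signature check failed")

    # Step 2: parse only after authentication, and reject malformed input.
    try:
        manifest = json.loads(manifest_bytes)
        expected_digest = manifest["sha256"]
        new_version = tuple(manifest["version"])
    except (ValueError, KeyError, TypeError) as exc:
        raise UpdateRejected("malformed manifest") from exc
    if not new_version or not all(isinstance(part, int) for part in new_version):
        raise UpdateRejected("malformed version")

    # Step 3: refuse downgrades, so an old signed release cannot be replayed.
    if new_version <= installed_version:
        raise UpdateRejected("update is not newer than the installed version")

    # Step 4: the payload must match the digest named in the signed manifest.
    if hashlib.sha256(payload).hexdigest() != expected_digest:
        raise UpdateRejected("payload digest mismatch")

    return manifest
```

Because every failure path raises, an installer built around this function applies an update only when `verify_update` returns normally. A tampered payload sitting on the distribution server fails at step 4 even though the server itself was compromised.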

Incident Response Documentation

You need a documented incident response plan that you've actually tested. When regulators investigate a breach, they evaluate not just what happened but how you responded. Companies that demonstrate clear, rehearsed procedures receive materially different treatment than those that improvise under pressure.

The Founder's Calculation

I talk to founders who view security investments as pure cost centers—engineering time that could be spent on features customers actually request. This framing misses the asymmetry of outcomes.

The probability of a serious supply-chain compromise in any given year is relatively low. But the consequences when it happens are existential. Litigation expenses, regulatory penalties, and customer flight can exceed your company's entire valuation. Your cyber insurance—if you have it—probably contains exclusions for incidents arising from failure to implement "reasonable security measures."

The companies that survive security incidents tend to be those that can demonstrate they took reasonable precautions. Not perfect precautions; perfection is neither achievable nor expected. But documented, systematic efforts to implement industry-standard protections.

When the breach happens—and probability suggests it eventually will—the question won't be whether you were victimized by sophisticated attackers. It will be whether you built the walls that any reasonable company would have built.

The "we got hacked" defense assumes victimhood is a shield. It isn't. It's a confession that you left the door open.