We're watching open source slowly choke on its own success story.

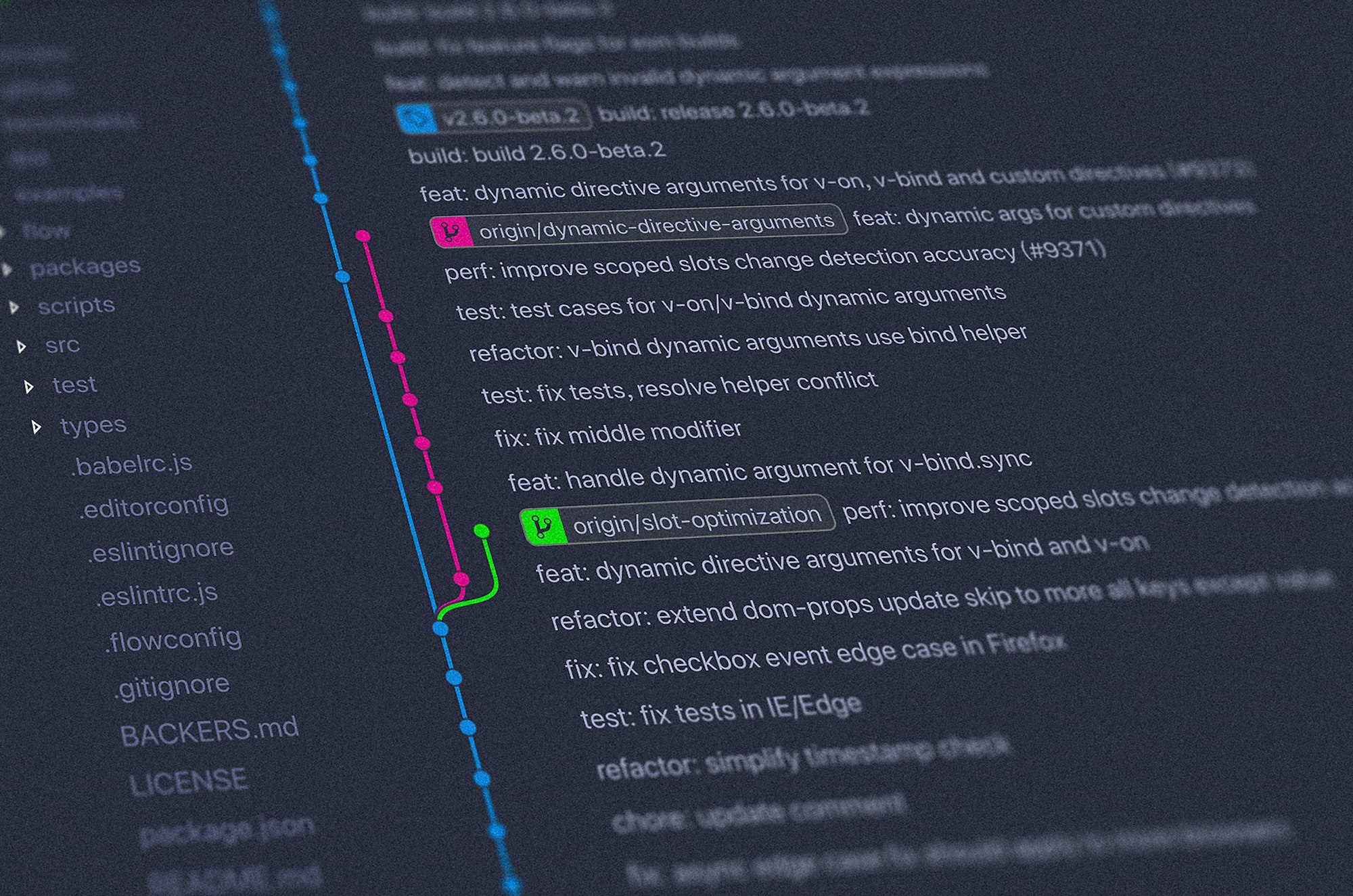

The pattern is now unmistakable: AI coding agents—Devin, Cursor, Claude, GitHub Copilot Workspace—are flooding GitHub with pull requests that look helpful but often aren't. Maintainers across major projects are reporting a new kind of spam: syntactically correct, contextually clueless contributions that waste hours of review time.

This isn't just a nuisance. It's the first real stress test of what happens when AI-generated code meets human-maintained infrastructure.

The Tragedy of the AI Commons

Open source has always depended on a fragile social contract: contributors give time and code, maintainers curate quality, and everyone benefits from shared infrastructure. AI agents are breaking this contract, not maliciously but mathematically. When the cost of generating a pull request drops to nearly zero, the volume explodes. Review time does not scale the same way, so when volume explodes, maintainer bandwidth collapses.
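To make the arithmetic concrete, here is a minimal sketch of a review queue. The function name and every number in it are hypothetical, chosen only for illustration: a fixed daily review capacity, and a PR arrival rate that jumps once generating a PR becomes nearly free.

```python
def review_backlog(daily_prs, daily_review_capacity, days):
    """Return the number of unreviewed PRs at the end of each day."""
    backlog = 0
    history = []
    for _ in range(days):
        # New PRs arrive, maintainers review what they can, the rest carries over.
        backlog = max(0, backlog + daily_prs - daily_review_capacity)
        history.append(backlog)
    return history


# Hypothetical numbers: maintainers can review 5 PRs per day in both cases.
before = review_backlog(daily_prs=4, daily_review_capacity=5, days=30)
after = review_backlog(daily_prs=20, daily_review_capacity=5, days=30)

print(before[-1])  # 0: capacity exceeds arrivals, so the queue stays empty
print(after[-1])   # 450: a surplus of 15 PRs per day for 30 days
```

The point of the toy model is that the backlog grows linearly once arrivals exceed capacity, and nothing on the maintainer side grows with it.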

We've seen early signs at projects like curl, where Daniel Stenberg has been vocal about AI-generated submissions, security reports in particular, that "look plausible but miss the point entirely." Similar stories are emerging from Python, Node.js core, and dozens of mid-tier projects that lack the resources to handle the flood.

The uncomfortable truth: many AI agents are built and evaluated to maximize metrics (merged PRs, lines of code) rather than value (solving real problems, understanding project context). This creates a classic tragedy of the commons, where each agent's operator benefits from carpet-bombing repositories while the shared resource, maintainer attention, degrades.

Why This Matters for Founders

If you're building on open source (and let's be honest, you are), this should terrify you. Many of your dependencies are maintained by unpaid volunteers who are increasingly burnt out by AI noise. The maintainers most likely to quit are exactly the ones keeping critical infrastructure alive.

We're already seeing the effects: slower security patches, abandoned projects, and a growing reluctance to accept any contributions from unfamiliar names. Some projects are implementing "proof of humanity" requirements that add friction for legitimate contributors.

The second-order effects get worse. As AI-generated code proliferates, the training data for future AI models becomes polluted with mediocre examples. We're potentially entering a feedback loop where AI learns from AI output, with each generation slightly worse than the last. Researchers call this "model collapse"—and open source repos are becoming ground zero for the experiment.

The Counterargument That Doesn't Hold

Yes, some AI-generated contributions are genuinely useful. Yes, the tools will improve. But the current trajectory is clear: agent builders are incentivized to ship fast and measure success in volume, not quality. Until that changes—through better evaluation metrics, maintainer tools, or economic incentives—the noise will keep growing.

The projects that survive will be the ones that figure out filtering first. Expect to see AI-powered triage tools, reputation systems, and maybe even paid maintainer models emerging from this chaos.
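What might that filtering look like? Here is a rough sketch of a triage score that ranks incoming PRs by a few cheap signals. Everything in it is an assumption for illustration: the `PullRequest` fields, the weights, and the 500-line threshold are hypothetical, not taken from any real project or tool.

```python
from dataclasses import dataclass


@dataclass
class PullRequest:
    author_merged_prs: int  # prior PRs by this author merged in this repo
    links_issue: bool       # references an existing issue
    touches_tests: bool     # adds or updates tests
    lines_changed: int


def triage_score(pr: PullRequest) -> int:
    """Higher score means review sooner. Weights are illustrative only."""
    score = min(pr.author_merged_prs, 5)          # reputation, capped
    score += 2 if pr.links_issue else 0           # tied to a known problem
    score += 2 if pr.touches_tests else 0         # shows some project awareness
    score -= 2 if pr.lines_changed > 500 else 0   # large drive-by diffs cost more
    return score


def triage(prs):
    """Return PRs ordered from highest to lowest triage score."""
    return sorted(prs, key=triage_score, reverse=True)


drive_by = PullRequest(author_merged_prs=0, links_issue=False,
                       touches_tests=False, lines_changed=800)
regular = PullRequest(author_merged_prs=12, links_issue=True,
                      touches_tests=True, lines_changed=40)

print(triage_score(drive_by))  # -2
print(triage_score(regular))   # 9
```

A score like this only orders the queue; it does not decide what gets merged. It also shows the trade-off described above: any reputation signal that filters out noise adds friction for legitimate first-time contributors.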

The Founder Playbook

If you're building an AI coding tool, consider this a warning: the backlash is coming. Some projects are already implementing blanket bans on AI-generated PRs. Your agent's reputation will depend on quality, not quantity, and right now the industry is failing that test.

If you depend on open source, start thinking about maintainer sustainability as a supply chain risk. The library you rely on might not have anyone reviewing security patches six months from now.

The irony is thick: AI tools promised to democratize coding and accelerate development. Instead, they might be accelerating the burnout of the humans who keep the whole system running.

Open source isn't dying. But it's definitely getting a stress test it didn't ask for.